| Current | v9 | v8 | v7 | v6 | v5 | v4 | v3 | v2 | v1 | All | About |

Performance Counter

Of the four entropy sources discussed so far, the performance counter is the only one that really presents a challenge. The purpose of the performance counter is to describe the total number of cycles that have executed. On the outside, the performance counter would seem impossible to reliably determine. After all, how could one possibly determine the precise number of cycles that had occurred as a cookie was being generated? The answer, of course, comes down to the fact that the performance counter itself is, for all intents and purposes, just another measure of time. Windows provides two interesting user-mode APIs that deal with the performance counter. The first, QueryPerformanceCounter, is used to ask the kernel to read the current value of the performance counter[8]. The result of this query is stored in the 64-bit output parameter that the caller provides. The second API is QueryPerformanceFrequency. This routine is interesting because it returns a value that describes the amount that the performance counter will change in one second[7]. Documentation indicates that the frequency cannot change while the system is booted.

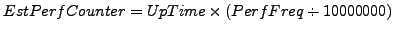

Using the existing knowledge about the uptime of the system and the calculation that can be performed to convert between the performance counter value and seconds, it is possible to fairly accurately guess what the performance counter was at the time that the cookie was generated. Granted, this method is more fuzzy than the previously described methods, as experimental results have shown a large degree of fluctuation in the lower 17 bits. Those results will be discussed in more detail in chapter 5. The actual equation that can be used to generate the estimated performance counter is to take the uptime, as measured in 100 nanosecond intervals, and multiply it by the performance frequency divided by 10000000, which converts the frequency from a measure of 1 second to 100 nanosecond:

In a fashion similar to tick count, a constant scaling factor of -165000 was determined through experimentation. This seems to produce more accurate results in some of the 24 low bits. Based on this calculation, it's possible to accurately determine the entire 32-bit high order integer and the first 15 bits of the 32-bit low order integer. Of course, if the system time estimate is wrong, then that directly effects this calculation.